Adaptive Bitrate Streaming (HLS vs. DASH): How YouTube Optimizes Video Quality

Introduction

You’re watching a tutorial on your phone during your commute. The train enters a tunnel, your signal drops from 4G to 3G, but the video keeps playing, just slightly blurrier. No buffering, no interruption. When you emerge back into full coverage, the quality ramps back up seamlessly. This isn’t magic; it’s adaptive bitrate streaming (ABR), the backbone of modern video delivery at YouTube, Netflix, and most other major platforms.

How Adaptive Bitrate Streaming Works

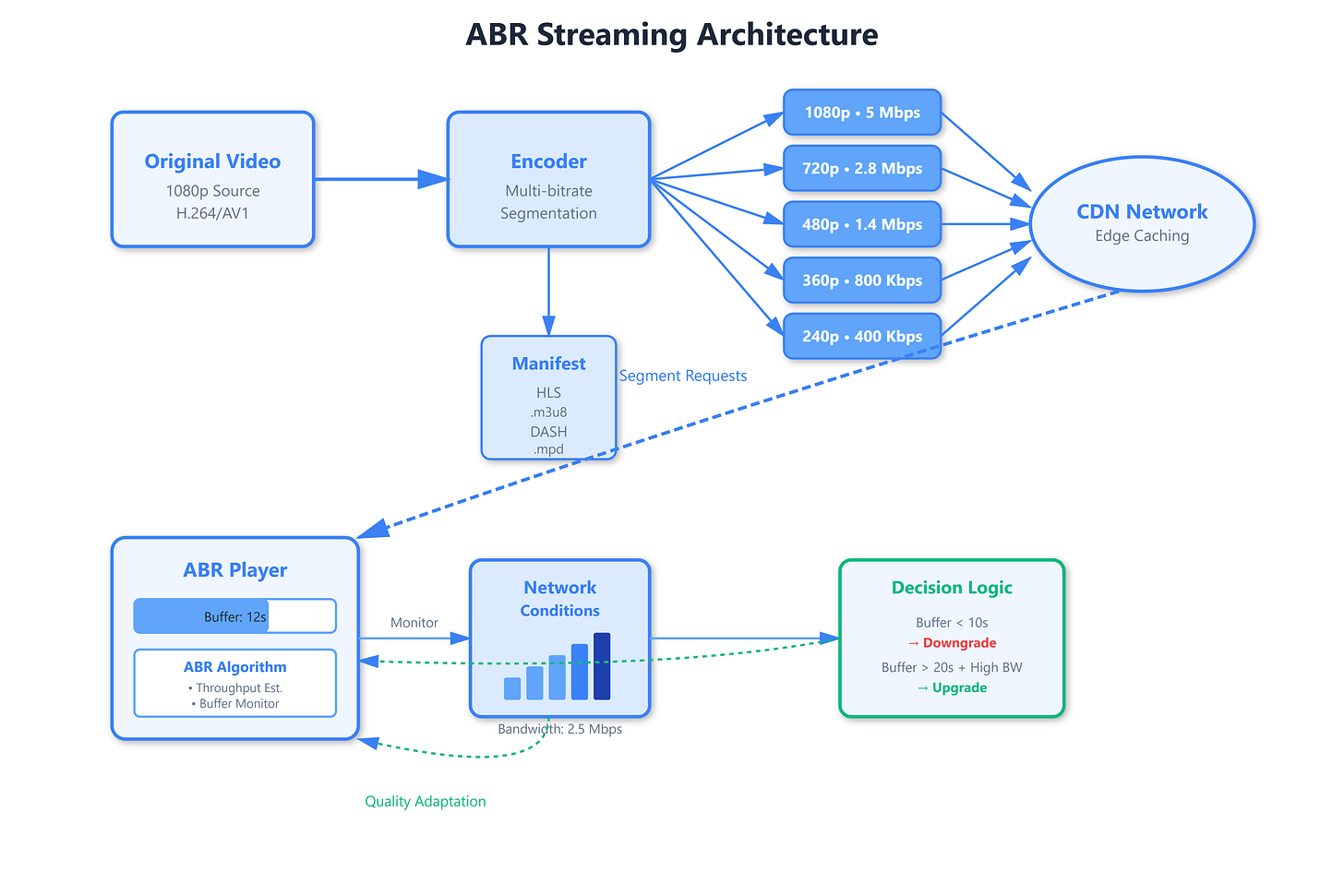

At its core, ABR is deceptively simple: encode the same video at multiple quality levels (240p, 360p, 720p, 1080p, 4K), chop each version into small segments (typically 2-10 seconds), and let the player dynamically choose which quality to fetch based on current network conditions. But the devil lives in the details—buffer management, quality switching algorithms, and segment prefetching strategies separate amateur implementations from production-grade systems.
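To make the "chop each version into small segments" step concrete, here is a minimal sketch of how segment boundaries and file names might be laid out for each quality level. The rendition labels come from the list above; the 6-second segment length, the function names, and the file naming scheme are illustrative assumptions, not YouTube's actual layout.

```python
import math

# Quality levels named in the text; a real ladder varies by platform and content.
RENDITIONS = ["240p", "360p", "720p", "1080p", "4K"]


def segment_boundaries(duration_s: float, segment_s: float) -> list[tuple[float, float]]:
    """Return (start, length) pairs covering the whole video.

    The last segment is shorter when the duration is not a multiple
    of the segment length.
    """
    count = math.ceil(duration_s / segment_s)
    return [
        (i * segment_s, min(segment_s, duration_s - i * segment_s))
        for i in range(count)
    ]


def segment_name(rendition: str, index: int) -> str:
    """Hypothetical naming scheme: one directory per rendition."""
    return f"{rendition}/seg_{index:05d}.ts"


if __name__ == "__main__":
    # Assumed example: a 10-minute video cut into 6-second segments.
    bounds = segment_boundaries(600, 6)
    total_files = len(bounds) * len(RENDITIONS)
    print(len(bounds), "segments per rendition,", total_files, "files in total")
    print(segment_name("720p", 0))
```

Because every rendition is cut at the same boundaries, the player can switch quality at any segment edge without re-seeking.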

The player maintains a buffer of downloaded segments (usually 10-30 seconds of video) and constantly monitors two critical metrics: download throughput and buffer fill level. If segments arrive faster than real time (a 4-second segment downloads in well under 4 seconds) and the buffer is healthy, the player requests higher-quality segments. If throughput drops or the buffer drains below a threshold (typically 5-10 seconds), it switches to a lower bitrate right away to prevent rebuffering, the dreaded spinning wheel that kills user engagement.
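The decision loop described above can be sketched as a small heuristic that combines measured throughput with buffer level. This is a simplified illustration, not the algorithm any particular player uses: the bitrate ladder, the 5-second low-buffer threshold, the 0.8 safety factor, and the "step up one rung at a time" rule are all assumptions chosen for the example.

```python
# Hypothetical bitrate ladder in kbps, lowest to highest quality.
LADDER_KBPS = [400, 800, 2500, 5000, 16000]

LOW_BUFFER_S = 5.0   # assumed threshold below which the player must step down
SAFETY_FACTOR = 0.8  # assumed headroom: budget only 80% of measured throughput


def choose_bitrate(throughput_kbps: float, buffer_s: float, current_kbps: int) -> int:
    """Pick the bitrate for the next segment request."""
    current = LADDER_KBPS.index(current_kbps)
    budget = throughput_kbps * SAFETY_FACTOR

    # Highest rung whose bitrate fits the throughput budget (lowest rung if none fit).
    affordable = [i for i, kbps in enumerate(LADDER_KBPS) if kbps <= budget]
    target = affordable[-1] if affordable else 0

    if buffer_s < LOW_BUFFER_S:
        # Buffer is draining: drop at least one rung, more if throughput demands it.
        return LADDER_KBPS[max(0, min(target, current - 1))]
    if target > current:
        # Buffer is healthy and there is headroom: climb one rung only,
        # so a brief throughput spike does not cause a large quality jump.
        return LADDER_KBPS[current + 1]
    # Otherwise hold, or fall back to what the network can sustain.
    return LADDER_KBPS[target]


if __name__ == "__main__":
    print(choose_bitrate(10_000, 20.0, 800))   # healthy buffer, fast network
    print(choose_bitrate(10_000, 3.0, 2500))   # low buffer forces a step down
    print(choose_bitrate(1_200, 20.0, 5000))   # throughput collapsed
```

Stepping up gradually but stepping down aggressively is a common design choice, since a rebuffer is usually more disruptive to the viewer than a few seconds at lower quality.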

Two protocols dominate this space: HLS (HTTP Live Streaming) by Apple and DASH (Dynamic Adaptive Streaming over HTTP) by MPEG. HLS uses .m3u8 playlist files that list segment URLs, with a master playlist referencing the different quality variants. DASH uses XML-based MPD (Media Presentation Description) files serving the same purpose. Both break videos into segments and both support ABR, but they differ in codec flexibility and ecosystem support: DASH is codec-agnostic by design, while HLS has native playback support across Apple devices.
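As an illustration of what a master playlist contains, the sketch below parses a small, made-up HLS master playlist and extracts each variant's bandwidth, resolution, and playlist URI. The `#EXT-X-STREAM-INF` tag and its `BANDWIDTH`, `RESOLUTION`, and `CODECS` attributes are part of the HLS format; the sample playlist contents, the URIs, and the `parse_master_playlist` helper are hypothetical, and a production player would use a full parser rather than this minimal one.

```python
import re

# Made-up master playlist for illustration; URIs and values are assumptions.
MASTER_PLAYLIST = """#EXTM3U
#EXT-X-STREAM-INF:BANDWIDTH=800000,RESOLUTION=640x360,CODECS="avc1.4d401e,mp4a.40.2"
360p/index.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=2500000,RESOLUTION=1280x720,CODECS="avc1.4d401f,mp4a.40.2"
720p/index.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=5000000,RESOLUTION=1920x1080,CODECS="avc1.640028,mp4a.40.2"
1080p/index.m3u8
"""

# Attribute values are either quoted strings (which may contain commas) or bare tokens.
ATTR_RE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')


def parse_master_playlist(text: str) -> list[dict]:
    """Return one dict per variant stream listed in the master playlist."""
    variants = []
    pending = None  # attributes from the most recent #EXT-X-STREAM-INF line
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("#EXT-X-STREAM-INF:"):
            attr_text = line.split(":", 1)[1]
            pending = {key: value.strip('"') for key, value in ATTR_RE.findall(attr_text)}
        elif line and not line.startswith("#") and pending is not None:
            # The first non-comment line after the tag is the variant's playlist URI.
            variants.append({
                "uri": line,
                "bandwidth": int(pending["BANDWIDTH"]),
                "resolution": pending.get("RESOLUTION"),
            })
            pending = None
    return variants


if __name__ == "__main__":
    for variant in sorted(parse_master_playlist(MASTER_PLAYLIST), key=lambda v: v["bandwidth"]):
        print(variant["resolution"], variant["bandwidth"], variant["uri"])
```

A DASH MPD carries equivalent information as XML elements instead of tagged text lines, so the player-side selection logic can stay the same across both formats.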