Edge Caching Dynamic Content: Strategies for Reducing Latency for Global Users

When Static Caching Breaks Down

Picture this: your e-commerce platform serves users globally. A customer in Singapore clicks your homepage. The CDN edge node in Singapore has the HTML cached, so the response is lightning fast, right? Wrong. That cached page shows yesterday’s personalized recommendations and the wrong currency. Meanwhile, the origin server in Virginia is hammering your database to regenerate the same product listings for thousands of users. You’re stuck choosing between stale personalized content and punishing latency for every dynamic element.

This is where most caching strategies collapse. Traditional CDN caching assumes content is either fully static or fully dynamic. But modern applications exist in the messy middle—pages with personalized headers, region-specific pricing, user-specific recommendations, but identical product catalogs and navigation. The challenge isn’t whether to cache, but what and how to cache when every request carries unique user context.

The Architecture of Partial Caching

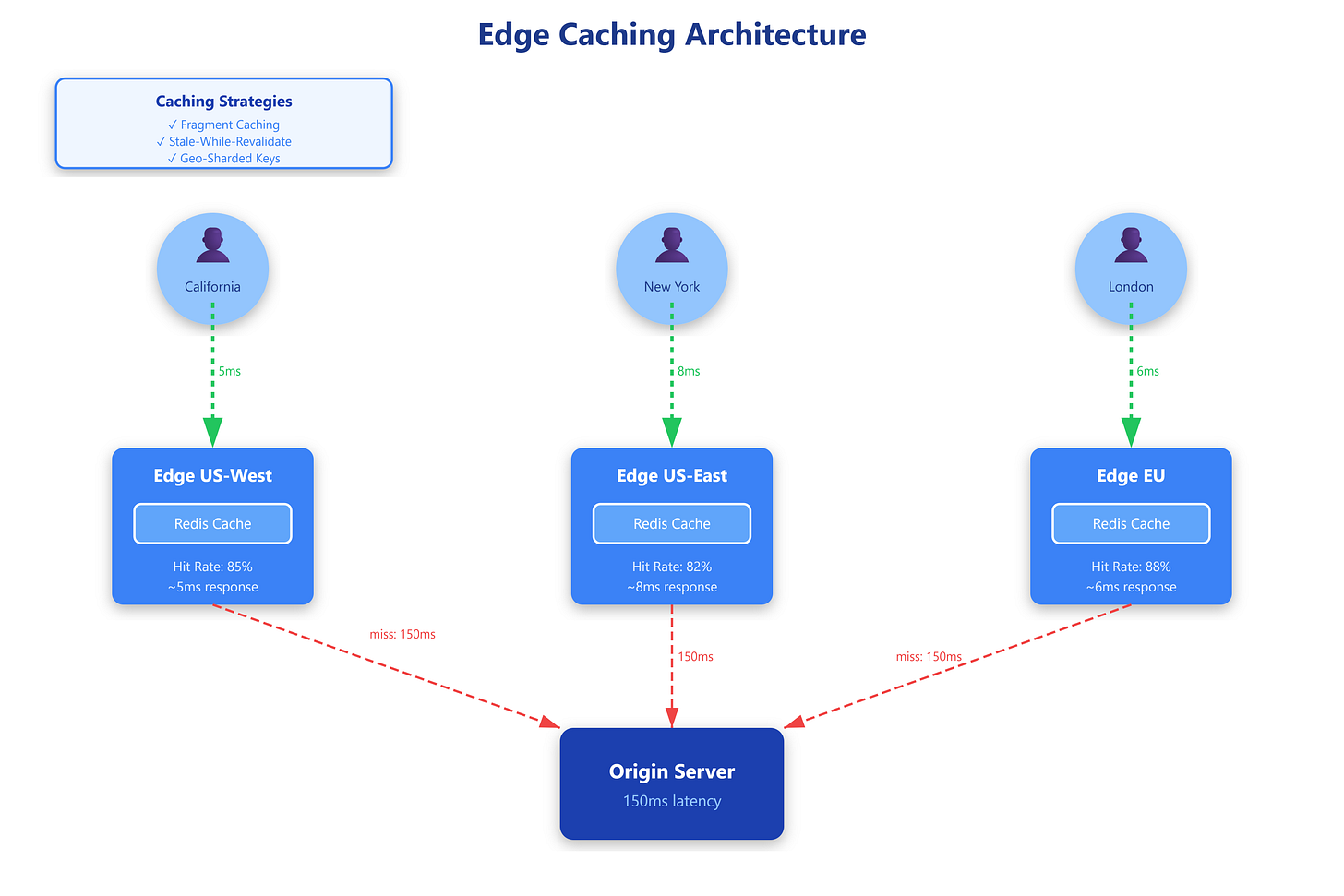

Edge caching for dynamic content relies on content fragmentation—decomposing responses into cacheable and uncacheable parts. Instead of caching entire HTML pages, you cache fragments with different TTLs and compose them at the edge.

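Edge Side Includes (ESI) pioneered this approach. Your origin returns HTML with ESI tags such as <esi:include src="/personalized-header" />. The edge node caches the skeleton page with a long TTL and resolves each include separately, fetching the personalized fragments on every request. Support varies by platform: Varnish and Fastly implement a subset of ESI, Akamai supports it natively, and Cloudflare Workers has no built-in ESI processor, so the same composition is done in Worker code (for example with HTMLRewriter). Either way, the cached layout is served straight from the edge and only the personalized sections pay for an extra fetch.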
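To make the composition step concrete, here is a minimal Python sketch of what an edge node does with a cached skeleton. It is not a real ESI processor: it handles only self-closing esi:include tags, and the names compose_at_edge, fetch_fragment, and the sample fragment body are assumptions made for illustration.

```python
import re
from typing import Callable

# Matches self-closing includes such as <esi:include src="/personalized-header" />.
ESI_INCLUDE = re.compile(r'<esi:include\s+src="([^"]+)"\s*/>')


def compose_at_edge(skeleton: str, fetch_fragment: Callable[[str], str]) -> str:
    """Replace every esi:include tag in a cached skeleton with its fragment body."""
    # A function replacement keeps re.sub from interpreting backslashes in fragment HTML.
    return ESI_INCLUDE.sub(lambda match: fetch_fragment(match.group(1)), skeleton)


# The skeleton would come from the edge cache; fragments are fetched per request.
skeleton = (
    "<html><body>"
    '<esi:include src="/personalized-header" />'
    "<main>Shared product catalog</main>"
    "</body></html>"
)
# Hypothetical fragment store standing in for a per-user origin call.
fragments = {"/personalized-header": "<header>Welcome back, prices in SGD</header>"}
page = compose_at_edge(skeleton, fragments.__getitem__)
```

The skeleton and the fragments have separate lifetimes here: the skeleton string can be reused across users, while fetch_fragment runs once per include on every request.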

Fragment caching with cache keys takes this further. Instead of binary cached/uncached decisions, you create composite cache keys of the form {content-type}:{id}:{region}:{user-tier}. A product page might cache as product:123:us:premium, reusing the same rendered HTML for all premium US users. This keeps the key space bounded while still varying the content: a catalog of 10,000 products × 3 regions × 4 user tiers creates at most 120,000 cache entries, yet serves millions of users without hitting the origin.
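A composite key like product:123:us:premium can be built with a few lines of Python. This is a sketch under assumptions: the region and tier names below are hypothetical stand-ins for the 3 regions and 4 tiers mentioned above, and build_cache_key and max_entries are illustrative helpers, not part of any CDN API.

```python
# Hypothetical allow-lists; every value added here multiplies the key space.
REGIONS = ("us", "eu", "apac")
USER_TIERS = ("free", "basic", "premium", "enterprise")


def build_cache_key(content_type: str, resource_id: int, region: str, user_tier: str) -> str:
    """Build a key such as 'product:123:us:premium' from coarse request attributes."""
    region, user_tier = region.lower(), user_tier.lower()
    # Rejecting unknown values keeps arbitrary input from creating new cache entries.
    if region not in REGIONS:
        raise ValueError(f"unknown region: {region!r}")
    if user_tier not in USER_TIERS:
        raise ValueError(f"unknown user tier: {user_tier!r}")
    return f"{content_type}:{resource_id}:{region}:{user_tier}"


def max_entries(product_count: int) -> int:
    """Upper bound on cache entries for one content type."""
    return product_count * len(REGIONS) * len(USER_TIERS)


key = build_cache_key("product", 123, "US", "Premium")
bound = max_entries(10_000)
```

Normalizing and validating each dimension matters because the key space is the product of all of them. Putting a raw user ID or an unvalidated header into the key would turn a bounded cache into one entry per user.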

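Stale-while-revalidate (SWR) adds resilience. When cached content passes its TTL, the edge serves the stale version immediately while refreshing it asynchronously, so users get instant responses and the cache stays warm. In HTTP this is expressed with the Cache-Control extension of the same name, for example Cache-Control: max-age=30, stale-while-revalidate=60, which bounds how long stale content may be served. The pattern shines where some staleness is acceptable: product listings can be 30 seconds old, but the user experience demands sub-100ms latency.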
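The serve-stale-then-refresh decision can be sketched in Python as follows. This is an illustration under assumptions, not how any particular CDN implements it: SWRCache, its parameters, and the explicit run_pending_refreshes step (standing in for a background task on a real edge runtime) are all hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Entry:
    body: str
    stored_at: float


class SWRCache:
    """Serves fresh hits, serves stale hits while queueing a refresh, fetches on miss."""

    def __init__(self, fetch: Callable[[str], str], ttl: float, swr: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.fetch, self.ttl, self.swr, self.clock = fetch, ttl, swr, clock
        self.entries: dict[str, Entry] = {}
        self.pending_refresh: set[str] = set()

    def get(self, key: str) -> tuple[str, str]:
        now = self.clock()
        entry = self.entries.get(key)
        if entry is not None:
            age = now - entry.stored_at
            if age <= self.ttl:
                return entry.body, "fresh"
            if age <= self.ttl + self.swr:
                # Answer immediately; the refresh happens off the request path.
                self.pending_refresh.add(key)
                return entry.body, "stale"
        # Miss, or too stale to serve: the caller waits for the origin.
        body = self.fetch(key)
        self.entries[key] = Entry(body, now)
        self.pending_refresh.discard(key)
        return body, "miss"

    def run_pending_refreshes(self) -> None:
        """Stand-in for the asynchronous revalidation a real edge would run."""
        for key in list(self.pending_refresh):
            self.entries[key] = Entry(self.fetch(key), self.clock())
        self.pending_refresh.clear()
```

With ttl=30 and swr=60, a request at 45 seconds returns the old body tagged "stale" without calling the origin, and the next request after the refresh sees the new body. Past 90 seconds the entry is too old to serve, and the request falls back to a blocking fetch.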

The implementation mechanics matter. Edge nodes maintain layered caches: L1 in memory (microsecond access), L2 on local SSD (millisecond access), and L3 at regional aggregation points. A cache miss cascades through these layers before hitting the origin. Edge compute platforms such as Lambda@Edge or Cloudflare Workers let you inject custom logic: checking user authentication, rewriting cache keys, or collapsing requests so that a thundering herd of concurrent misses for the same uncached resource triggers a single origin fetch.
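The layered lookup and request collapsing described above can be sketched together in Python with asyncio. Everything here is an assumption made for illustration: plain dicts stand in for the memory, SSD, and regional tiers, and TieredCache is a hypothetical class rather than a Lambda@Edge or Workers API.

```python
import asyncio
from typing import Awaitable, Callable

Fetch = Callable[[str], Awaitable[str]]


class TieredCache:
    """Checks L1, L2, L3 in order, then the origin, collapsing concurrent misses."""

    def __init__(self, fetch_origin: Fetch) -> None:
        # Index 0 is L1 (memory), 1 is L2 (SSD), 2 is L3 (regional tier).
        self.tiers: list[dict[str, str]] = [{}, {}, {}]
        self._fetch_origin = fetch_origin
        self._inflight: dict[str, asyncio.Task] = {}

    async def get(self, key: str) -> str:
        for depth, tier in enumerate(self.tiers):
            if key in tier:
                body = tier[key]
                # Promote the hit into the faster tiers above it.
                for upper in self.tiers[:depth]:
                    upper[key] = body
                return body
        return await self._fetch_collapsed(key)

    async def _fetch_collapsed(self, key: str) -> str:
        task = self._inflight.get(key)
        if task is None:
            # First miss for this key starts the fetch; later misses share it.
            task = asyncio.create_task(self._fill(key))
            self._inflight[key] = task
            task.add_done_callback(lambda _done: self._inflight.pop(key, None))
        return await task

    async def _fill(self, key: str) -> str:
        body = await self._fetch_origin(key)
        for tier in self.tiers:
            tier[key] = body
        return body
```

If fifty coroutines call get for the same uncached key at once, only the first creates a task and the other forty-nine await it, so the origin sees a single request. A production version would also need eviction, per-tier TTLs, and a policy for what waiters see when the shared fetch fails or is cancelled.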