Feature Flag Systems

Architecture and Implementation

You deploy a major feature to production at 2 AM. Within minutes, users report errors and your database is overloaded. Reverting means another 20-minute deployment cycle while your system hemorrhages users. This nightmare scenario is why companies like Netflix, Facebook, and Stripe don’t just deploy features; they deploy dark, shipping the code with the feature switched off. Feature flags let you separate deployment from release, so you can turn a feature on or off within seconds without redeploying.

The Feature Flag Mechanism

A feature flag system is a distributed configuration service that controls code paths at runtime. Instead of branching with if (NEW_FEATURE), you query a service: if (flags.isEnabled('new-checkout')). This seemingly simple change transforms how software reaches users.

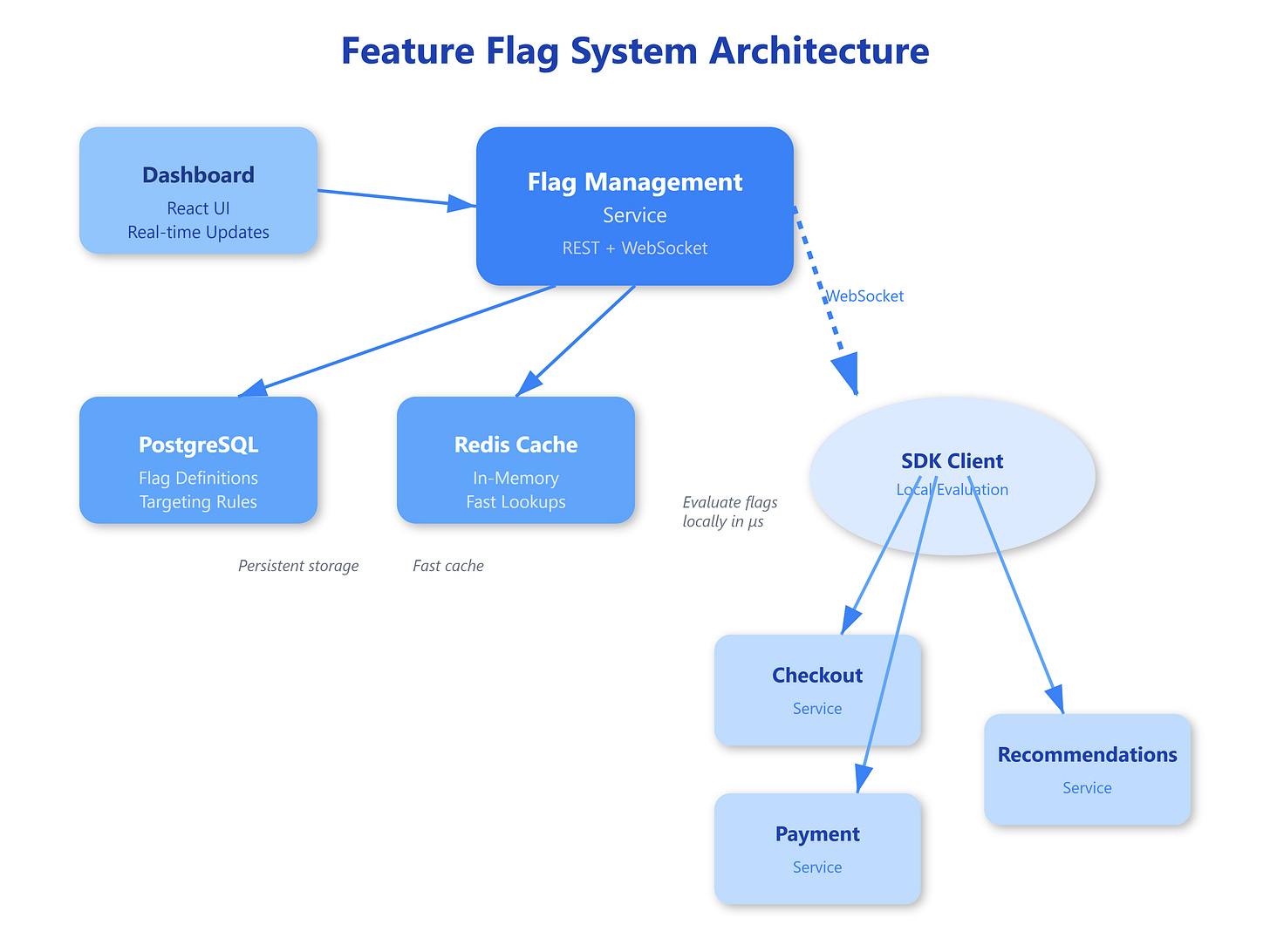

The architecture has three core components: a flag management service storing flag definitions and rules, SDK clients embedded in your applications that evaluate flags locally, and a real-time sync mechanism propagating changes across your fleet in seconds. The management service maintains the source of truth—flag states, targeting rules, and user segments—while SDKs cache this data locally to avoid network calls on every flag check.
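To make the split between the management service and the SDK concrete, here is a minimal sketch of the client side. The `FlagClient` class and its `apply_update` method are hypothetical names, not the API of any particular product, and the sync mechanism (polling or streaming) is assumed to exist elsewhere and simply hand the client a fresh set of flags.

```python
import threading
from typing import Dict


class FlagClient:
    """Hypothetical SDK client: evaluates flags from a local in-memory snapshot."""

    def __init__(self, initial_flags: Dict[str, bool]) -> None:
        self._lock = threading.Lock()
        self._flags: Dict[str, bool] = dict(initial_flags)

    def apply_update(self, new_flags: Dict[str, bool]) -> None:
        """Called by the (assumed) sync mechanism whenever flag definitions change."""
        snapshot = dict(new_flags)  # copy so later mutations by the caller do not leak in
        with self._lock:
            self._flags = snapshot  # replace the whole snapshot in one step

    def is_enabled(self, key: str, default: bool = False) -> bool:
        """Local lookup only: no network call happens on the request path."""
        with self._lock:
            return self._flags.get(key, default)


flags = FlagClient({"new-checkout": False})
before = flags.is_enabled("new-checkout")   # feature is deployed but dark
flags.apply_update({"new-checkout": True})  # a change pushed from the management service
after = flags.is_enabled("new-checkout")    # same code, new behavior, no redeploy
unknown = flags.is_enabled("missing-flag")  # unknown flags fall back to the default
```

Falling back to a default for unknown flags is a design choice in this sketch: it keeps the application running if the cache has not yet received a definition.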

Here’s the critical part most engineers miss: flag evaluation must be extremely fast because it sits on the hot path of every request, often many times over. A checkout flow might check 15 flags covering payment methods, shipping options, and promotional banners. If each check required a 50 ms network round trip and the checks ran one after another, you would add 750 ms of latency to the request. Production systems instead evaluate flags in microseconds against an in-memory cache that is refreshed in the background.
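The arithmetic can be checked with a short script. This is an illustrative sketch only: the 15 checks and the 50 ms round trip come from the example above, the flag names are made up, and the measured local figure will vary by machine.

```python
import timeit

FLAG_CHECKS_PER_REQUEST = 15
NETWORK_ROUND_TRIP_MS = 50

# Worst case from the text: every check is a sequential network call.
remote_latency_ms = FLAG_CHECKS_PER_REQUEST * NETWORK_ROUND_TRIP_MS

# Stand-in for the SDK's local cache (hypothetical flag names).
cache = {f"flag-{i}": i % 2 == 0 for i in range(FLAG_CHECKS_PER_REQUEST)}


def check_all_flags() -> int:
    """Evaluate every flag once from memory and count how many are on."""
    return sum(1 for key in cache if cache.get(key, False))


runs = 10_000
total_seconds = timeit.timeit(check_all_flags, number=runs)
local_latency_us = total_seconds / runs * 1_000_000

print(f"remote: {remote_latency_ms} ms per request")
print(f"local:  {local_latency_us:.2f} microseconds per request")
```

On typical hardware the local figure lands several orders of magnitude below the remote one, which is the gap the in-memory cache exists to close.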

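The evaluation engine processes complex rules such as “Enable for 10% of users in California using iOS” or “Show to premium subscribers who signed up after Jan 2024.” This requires user context (location, device, account type) for the targeting conditions, plus deterministic hashing for the percentage: the engine hashes a stable identifier such as the user ID together with the flag key, maps the result to a bucket, and enables the flag when the bucket falls below the rollout threshold. Because the hash never changes for a given user and flag, the same user always sees the same experience. A user shouldn’t see Feature A on page load but not on refresh. That is jarring, and it breaks analytics.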
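One common way to get that stability is to hash the flag key together with the user ID and map the result to a bucket from 0 to 99. The sketch below assumes SHA-256 and a simple `UserContext` type; both are illustrative choices, and `bucket` and `is_enabled` are hypothetical helpers rather than functions from a real SDK.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class UserContext:
    """Illustrative user context; real systems carry many more attributes."""
    user_id: str
    region: str
    platform: str


def bucket(flag_key: str, user_id: str) -> int:
    """Map (flag, user) to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(flag_key: str, user: UserContext, rollout_percent: int) -> bool:
    """Rule: enable for rollout_percent% of users in California using iOS."""
    if user.region != "CA" or user.platform != "ios":
        return False  # targeting conditions not met
    return bucket(flag_key, user.user_id) < rollout_percent


user = UserContext(user_id="user-42", region="CA", platform="ios")
on_page_load = is_enabled("new-checkout", user, rollout_percent=10)
on_refresh = is_enabled("new-checkout", user, rollout_percent=10)
android_user = UserContext(user_id="user-42", region="CA", platform="android")
```

Including the flag key in the hash input means a user who lands in the first 10% for one flag is not automatically in the first 10% for every other flag. Raising the percentage only adds users, since anyone whose bucket was below the old threshold stays below the new one.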