Real-Time Ad Bidding Systems (RTB): Designing for <100ms Responses

The Millisecond Economy

Every time you load a webpage, an invisible auction runs while the page is still rendering. Multiple advertisers compete to show you their ad in under 100 milliseconds, faster than you can blink. Miss that deadline by even a few milliseconds, and your bid is worthless: the ad slot goes to someone else, and you’ve wasted compute cycles on a bid that arrived too late.

This isn’t a theoretical constraint. It is the everyday reality of the real-time bidding (RTB) ecosystem, where billions of auctions happen daily and each one demands an end-to-end response in roughly 100ms. Platforms such as Google’s AdX, Amazon’s AAP, and The Trade Desk reportedly process over 10 million bid requests per second at peak, with latency budgets of a few tens of milliseconds at most for each hop.

How RTB Systems Achieve Sub-100ms Responses

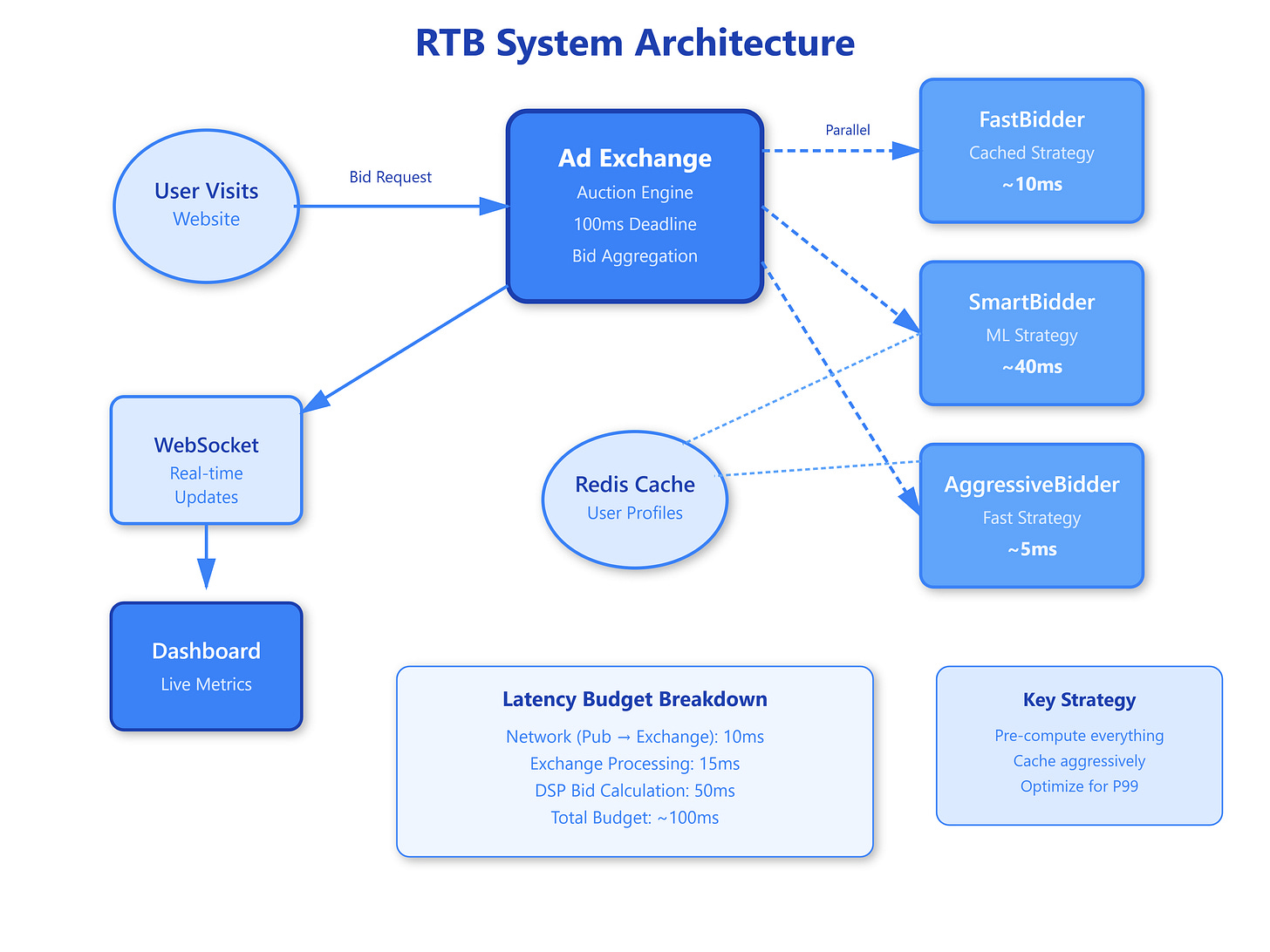

Real-time bidding operates on a strict request-response protocol. When a user visits a website, the publisher’s ad server sends a bid request to an ad exchange. The exchange simultaneously broadcasts this request to hundreds of demand-side platforms (DSPs), each of which must evaluate the impression, calculate a bid price, and respond. All of this has to fit within a total budget of roughly 80-120ms.
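To make the fanout concrete, here is a minimal sketch of an exchange that broadcasts one request to several DSPs and keeps only the bids that arrive before a deadline. It is an illustration, not how any particular exchange works. The names `run_auction` and `make_dsp`, the 50ms deadline, and the "highest bid wins" rule are all assumptions made for the example; real exchanges apply their own auction rules.

```python
import asyncio

DSP_DEADLINE_S = 0.05  # assumed deadline for DSP responses (50ms)

async def run_auction(dsps, request, deadline_s=DSP_DEADLINE_S):
    """Fan out to all DSPs at once and return (name, bid) for the highest on-time bid.

    dsps maps a DSP name to an async callable that takes the request and
    returns a CPM bid (float) or None for "no bid". Hypothetical interface.
    """
    if not dsps:
        return None
    tasks = {asyncio.create_task(bid_fn(request)): name
             for name, bid_fn in dsps.items()}
    # Wait until every DSP has answered or the deadline passes, whichever is first.
    done, pending = await asyncio.wait(list(tasks), timeout=deadline_s)
    # Late bidders are cancelled; their bids are never considered.
    for task in pending:
        task.cancel()
    await asyncio.gather(*pending, return_exceptions=True)
    bids = []
    for task in done:
        if task.exception() is None and task.result() is not None:
            bids.append((task.result(), tasks[task]))
    if not bids:
        return None
    price, name = max(bids)  # assumption: the highest bid simply wins
    return name, price

def make_dsp(bid, delay_s):
    """Build a fake DSP that answers with a fixed bid after delay_s seconds."""
    async def bid_fn(request):
        await asyncio.sleep(delay_s)
        return bid
    return bid_fn

dsps = {
    "dsp_a": make_dsp(1.8, 0.001),
    "dsp_b": make_dsp(2.1, 0.002),
    "dsp_late": make_dsp(5.0, 0.5),  # highest bid, but far past the deadline
}
result = asyncio.run(run_auction(dsps, {"imp_id": "demo"}))
print(result)  # ('dsp_b', 2.1)
```

Note that `dsp_late` offers the highest price and still loses: once the deadline passes, its bid is simply dropped.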

The latency budget breakdown looks roughly like this: 10ms for network transmission from publisher to exchange, 15ms for exchange processing and fanout to DSPs, 50ms for DSP bid calculation, 15ms for response aggregation and auction logic, and 10ms for network return to publisher. That 50ms window for DSPs is where the real engineering challenge lives.
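The arithmetic behind that breakdown is easy to check in a few lines. The sketch below uses the figures from the paragraph above; the stage names and helper functions are illustrative labels chosen for this example, not terms from any RTB specification.

```python
# Latency budget per stage, in milliseconds, taken from the breakdown above.
# The stage names are illustrative labels.
BUDGET_MS = {
    "publisher_to_exchange": 10,
    "exchange_fanout": 15,
    "dsp_bid_calculation": 50,
    "auction_logic": 15,
    "return_to_publisher": 10,
}

def total_budget_ms(budget=BUDGET_MS):
    """Sum of all stage budgets."""
    return sum(budget.values())

def dsp_share(budget=BUDGET_MS):
    """Fraction of the total budget available to the DSP."""
    return budget["dsp_bid_calculation"] / total_budget_ms(budget)

def dsp_time_remaining_ms(elapsed_in_dsp_ms, budget=BUDGET_MS):
    """Milliseconds left in the DSP window; a negative value means the bid is late."""
    return budget["dsp_bid_calculation"] - elapsed_in_dsp_ms

print(total_budget_ms())          # 100
print(dsp_share())                # 0.5
print(dsp_time_remaining_ms(42))  # 8
```

The stages add up to exactly 100ms, and the DSP gets half of it. Everything a DSP does, including parsing the request and serializing the response, has to fit inside that half.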

Successful RTB systems use aggressive pre-computation to meet these deadlines. Instead of calculating everything on the fly, they maintain hot caches of user profiles, campaign targeting rules, and bid multipliers. When a request arrives, the system performs quick lookups rather than complex computations. A DSP might pre-compute a segment such as “users in California interested in hiking equipment” and store a bid price per segment, then do simple hash lookups when the actual bid request arrives.
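A minimal sketch of that lookup-only hot path might look like the following. The tables, segment names, prices, and the `BidRequest` and `price_bid` names are all hypothetical; in a real system an offline job would build and refresh the tables, and pricing would involve more than taking the best segment price.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BidRequest:
    user_id: str
    floor_price_cpm: float  # minimum CPM the seller accepts (assumed field)

# Both tables stand in for data pre-computed offline (hypothetical values).
USER_SEGMENTS = {
    "user-123": ("ca_hiking", "outdoor_gear"),
    "user-456": ("ny_commuter",),
}
SEGMENT_BID_CPM = {
    "ca_hiking": 2.40,
    "outdoor_gear": 1.75,
    "ny_commuter": 0.90,
}

def price_bid(request):
    """Return a CPM bid, or None for "no bid".

    The hot path is two dictionary lookups and a max over a handful of
    segments; no model is evaluated while the request is waiting.
    """
    segments = USER_SEGMENTS.get(request.user_id, ())
    best = max((SEGMENT_BID_CPM.get(s, 0.0) for s in segments), default=0.0)
    if best <= 0.0 or best < request.floor_price_cpm:
        return None
    return best

print(price_bid(BidRequest("user-123", 1.00)))  # 2.4
print(price_bid(BidRequest("user-456", 1.00)))  # None (best price is below the floor)
print(price_bid(BidRequest("user-999", 0.10)))  # None (unknown user)
```

The expensive work, deciding which users belong to which segment and what each segment is worth, happens before any request arrives.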

The architecture typically involves multiple layers of caching: L1 in-memory caches for the hottest data (recent users, active campaigns), L2 distributed caches like Redis for broader targeting rules, and occasional database hits for cold data with strict timeout controls. If a database query takes more than 5ms, it’s often better to skip that signal entirely than risk missing the response deadline.
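The layered lookup with a hard cutoff can be sketched as below. This is an illustration under stated assumptions: `TieredLookup`, `l2_fetch`, and `cold_fetch` are hypothetical names, the L2 tier is a plain async function standing in for a distributed cache such as Redis, and the slow database is simulated with a sleep. A production version would also put a timeout on the L2 call and bound the size of the L1 cache.

```python
import asyncio

COLD_TIMEOUT_S = 0.005  # the 5ms cutoff for cold-data queries

class TieredLookup:
    """L1 in-process dict, then L2, then a cold store guarded by a timeout."""

    def __init__(self, l2_fetch, cold_fetch):
        self.l1 = {}
        self.l2_fetch = l2_fetch      # async callable: key -> value or None
        self.cold_fetch = cold_fetch  # async callable: key -> value or None

    async def get(self, key):
        if key in self.l1:
            return self.l1[key]
        value = await self.l2_fetch(key)
        if value is None:
            try:
                value = await asyncio.wait_for(
                    self.cold_fetch(key), timeout=COLD_TIMEOUT_S
                )
            except asyncio.TimeoutError:
                # Too slow: skip this signal instead of risking the deadline.
                return None
        if value is not None:
            self.l1[key] = value  # promote to L1 for later requests
        return value

async def demo():
    l2_data = {"campaign:7": {"multiplier": 1.2}}

    async def l2_fetch(key):
        return l2_data.get(key)

    async def slow_db(key):
        await asyncio.sleep(0.2)  # simulated 200ms query, far over the cutoff
        return {"multiplier": 9.9}

    lookup = TieredLookup(l2_fetch, slow_db)
    hit = await lookup.get("campaign:7")   # served from L2, then cached in L1
    miss = await lookup.get("campaign:8")  # cold query times out and is skipped
    return hit, miss

print(asyncio.run(demo()))  # ({'multiplier': 1.2}, None)
```

Returning `None` on timeout means the bid is priced without that signal, which is the trade-off the paragraph above describes: a slightly less informed bid that arrives on time beats a better one that arrives late.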