Real-Time Analytics Architecture: Processing Millions of Events Per Second

Introduction

Imagine you’re scrolling through Twitter during a major sporting event. Trending topics update every second. Live view counts climb in real time. Engagement metrics refresh instantly. Behind this seamless experience lies a real-time analytics architecture that processes millions of events per second, aggregates them on the fly, and delivers insights with sub-second latency. Building such systems requires understanding stream processing, windowing techniques, and the delicate balance between accuracy and speed.

Understanding Real-Time Analytics

Real-time analytics architectures process unbounded streams of events as they arrive, computing aggregations, detecting patterns, and triggering actions within milliseconds. Unlike batch processing, which operates on bounded datasets, stream processing treats data as a continuous flow that never ends.

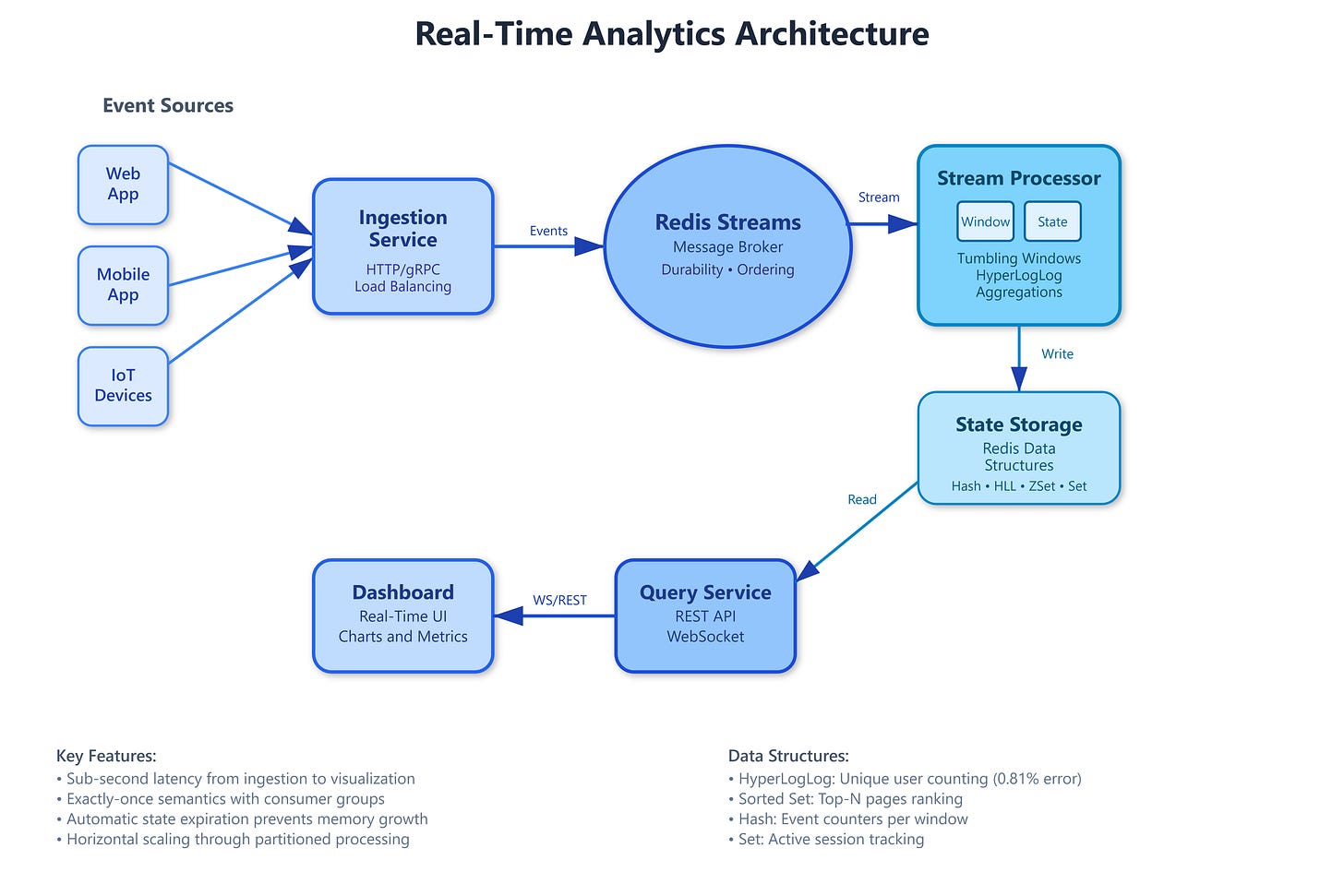

The core mechanism involves three stages: ingestion, processing, and serving. Events flow into a message broker (Kafka, Pulsar, or Redis Streams), which provides durability and ordering guarantees (in Kafka, ordering holds within a partition). Stream processors consume these events, maintaining state in memory or in fast local storage such as RocksDB. They apply transformations, perform aggregations over time windows, and emit results to downstream systems. Query layers serve pre-computed metrics from materialized views, enabling instant retrieval without scanning raw events.
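To make the three stages concrete, here is a minimal in-memory sketch. It is not how Kafka or any real stream processor is implemented: `InMemoryLog`, `PageViewCounter`, and the `page` event field are hypothetical names chosen for illustration, and the "broker" is just a Python list.

```python
from collections import defaultdict


class InMemoryLog:
    """Hypothetical stand-in for one broker partition: an append-only, ordered log."""

    def __init__(self):
        self._events = []

    def append(self, event):
        """Ingestion: store the event and return its offset."""
        self._events.append(event)
        return len(self._events) - 1

    def read_from(self, offset):
        """Return all events at or after the given offset, in order."""
        return self._events[offset:]


class PageViewCounter:
    """Processing and serving: consumes the log and maintains a materialized view."""

    def __init__(self, log):
        self.log = log
        self.next_offset = 0          # position of the next unread event
        self.view = defaultdict(int)  # materialized view: page -> view count

    def poll(self):
        """Processing: fold every unread event into the view."""
        events = self.log.read_from(self.next_offset)
        for event in events:
            self.view[event["page"]] += 1
        self.next_offset += len(events)

    def query(self, page):
        """Serving: answer from the pre-computed view without scanning raw events."""
        return self.view.get(page, 0)


log = InMemoryLog()
for page in ["/home", "/about", "/home"]:
    log.append({"page": page})

counter = PageViewCounter(log)
counter.poll()
print(counter.query("/home"))  # 2
```

The point of the split is that `query` never touches the log: reads stay cheap no matter how many raw events have been ingested.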

Time windows are fundamental to stream analytics. A tumbling window divides the stream into fixed, non-overlapping intervals: think “clicks per minute.” Sliding windows overlap, recomputing results as new events arrive: “clicks in the last 60 seconds, updated every second.” Session windows group events by bursts of activity, closing after a period of inactivity. Each window type makes a different trade-off among latency, accuracy, and computational cost.
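The three window types differ mainly in how an event timestamp maps to one or more windows. The sketch below shows one way to express that mapping, assuming integer timestamps in seconds and half-open windows `[start, end)` for the tumbling and sliding cases. The function names are illustrative and do not come from any particular framework.

```python
def tumbling_window(ts, size):
    """Return the single [start, end) window that contains ts."""
    start = ts - ts % size
    return (start, start + size)


def sliding_windows(ts, size, slide):
    """Return every [start, end) window of length `size` that contains ts.

    Window starts are multiples of `slide`, so each event belongs to
    roughly size / slide overlapping windows.
    """
    windows = []
    start = ts - ts % slide          # latest window start at or before ts
    while start > ts - size:         # window still covers ts
        windows.append((start, start + size))
        start -= slide
    return sorted(windows)


def session_windows(timestamps, gap):
    """Group timestamps into sessions as (first_event, last_event) pairs.

    A session closes when the next event arrives more than `gap`
    seconds after the previous one.
    """
    sessions = []
    for ts in sorted(timestamps):
        if sessions and ts - sessions[-1][1] <= gap:
            sessions[-1] = (sessions[-1][0], ts)   # extend the open session
        else:
            sessions.append((ts, ts))              # start a new session
    return sessions


print(tumbling_window(125, 60))                      # (120, 180)
print(sliding_windows(125, 60, 30))                  # [(90, 150), (120, 180)]
print(session_windows([1, 2, 3, 20, 21, 50], 10))    # [(1, 3), (20, 21), (50, 50)]
```

The cost difference is visible in the return types: a tumbling window updates one aggregate per event, a sliding window updates several, and a session window cannot be finalized until the inactivity gap has actually elapsed.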

State management separates good implementations from great ones. As windows advance, processors must maintain intermediate results (counters, sets, sketches) in fault-tolerant storage. When a node crashes mid-computation, the system must resume from a consistent checkpoint without double-counting events or losing progress. Kafka’s exactly-once semantics, which rely on idempotent producers and transactions, combined with periodic state snapshots, preserve correctness across failures for pipelines that read from and write to Kafka. Side effects in external systems still need idempotent or transactional writes.
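The key idea behind resuming without double-counting is that the state and the input position are saved together, so a restored worker replays exactly the events its state has not yet seen. The following is a simplified sketch of that idea only. `CheckpointedCounter` is a hypothetical class, the log is a plain list, and the snapshot is kept in memory rather than in durable storage.

```python
class CheckpointedCounter:
    """Counts keys from a log and checkpoints state together with its offset."""

    def __init__(self):
        self.counts = {}
        self.offset = 0  # index of the next event to process

    def process(self, log, upto):
        """Process events from the current offset up to (not including) `upto`."""
        limit = min(upto, len(log))
        while self.offset < limit:
            key = log[self.offset]
            self.counts[key] = self.counts.get(key, 0) + 1
            self.offset += 1

    def snapshot(self):
        """Capture state and offset as one unit so they can never disagree."""
        return {"counts": dict(self.counts), "offset": self.offset}

    @classmethod
    def restore(cls, snap):
        """Rebuild a worker from a snapshot taken earlier."""
        worker = cls()
        worker.counts = dict(snap["counts"])
        worker.offset = snap["offset"]
        return worker


log = ["a", "b", "a", "c", "a"]

worker = CheckpointedCounter()
worker.process(log, upto=3)
checkpoint = worker.snapshot()     # state covers events 0..2
worker.process(log, upto=4)        # event 3 is processed, then the node "crashes"

recovered = CheckpointedCounter.restore(checkpoint)
recovered.process(log, upto=len(log))   # replays event 3, then continues
print(recovered.counts)            # {'a': 3, 'b': 1, 'c': 1}
```

Event 3 is processed twice in wall-clock terms, but it is counted once, because the work done after the checkpoint was discarded along with the crashed worker's state. Anything the crashed worker had already emitted to an external system would not be rolled back by this mechanism.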

The Lambda Architecture addresses a critical challenge: stream processing often trades accuracy for speed, using approximations such as HyperLogLog for distinct counts or Count-Min Sketch for frequency estimation. To provide precise results, it runs a parallel batch pipeline that periodically (for example, nightly) reprocesses the complete dataset and corrects the streaming approximations. The more recent Kappa Architecture eliminates the batch layer: recomputation is done by replaying the retained event stream through the same streaming code, which simplifies operations.
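To show what such an approximation looks like, here is a small Count-Min Sketch. It is a teaching sketch rather than a production implementation: the `width` and `depth` defaults are arbitrary, and deriving one hash per row from SHA-256 is a simplification chosen for determinism, not for speed.

```python
import hashlib


class CountMinSketch:
    """Fixed-size frequency estimator that may overcount but never undercounts."""

    def __init__(self, width=1024, depth=4):
        self.width = width
        self.depth = depth
        self.table = [[0] * width for _ in range(depth)]

    def _index(self, item, row):
        """Map an item to a column in the given row using a row-specific hash."""
        digest = hashlib.sha256(f"{row}:{item}".encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "little") % self.width

    def add(self, item, count=1):
        """Increment one counter per row for this item."""
        for row in range(self.depth):
            self.table[row][self._index(item, row)] += count

    def estimate(self, item):
        """Return the minimum across rows, the least collision-inflated counter."""
        return min(
            self.table[row][self._index(item, row)] for row in range(self.depth)
        )


sketch = CountMinSketch()
for word in ["goal", "goal", "foul", "goal", "corner"]:
    sketch.add(word)

# The estimate is at least the true count (3 here) and usually equal to it
# when the table is large relative to the number of distinct items.
print(sketch.estimate("goal"))
```

Memory stays at `width * depth` counters regardless of how many distinct items appear in the stream, which is the property that makes the structure attractive for the speed layer. The price is that hash collisions can only push an estimate upward, and this one-sided error is what a batch or replay pass can later correct.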