Real-Time Processing Systems: Architecture Patterns

When you click “Buy Now” on an e-commerce site and see the stock count update instantly, or watch a live sports score refresh without hitting reload, you’re experiencing real-time processing. But here’s what many engineers miss: real-time doesn’t mean “instantaneous.” It means predictable latency within defined bounds.
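To make “predictable latency within defined bounds” concrete, the sketch below checks a latency budget against a high percentile rather than the average. It is a minimal Python illustration, assuming nearest-rank percentiles and made-up sample latencies; `percentile` and `meets_latency_bound` are hypothetical helper names, not part of any particular monitoring library.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples <= it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def meets_latency_bound(latencies_ms, bound_ms, p=99):
    """True if the p-th percentile latency stays within bound_ms."""
    return percentile(latencies_ms, p) <= bound_ms


# Made-up request latencies in ms: mostly fast, one slow outlier.
latencies = [12, 15, 11, 14, 13, 16, 12, 250, 14, 13]
print(percentile(latencies, 50))                  # 13
print(percentile(latencies, 99))                  # 250
print(meets_latency_bound(latencies, 100, p=90))  # True
print(meets_latency_bound(latencies, 100))        # False
```

The median (13 ms) looks healthy, yet a single slow request pushes the p99 to 250 ms and breaks a 100 ms bound. A system only counts as real-time against a stated bound if its tail stays under that bound, which is why latency targets are often expressed as percentiles.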

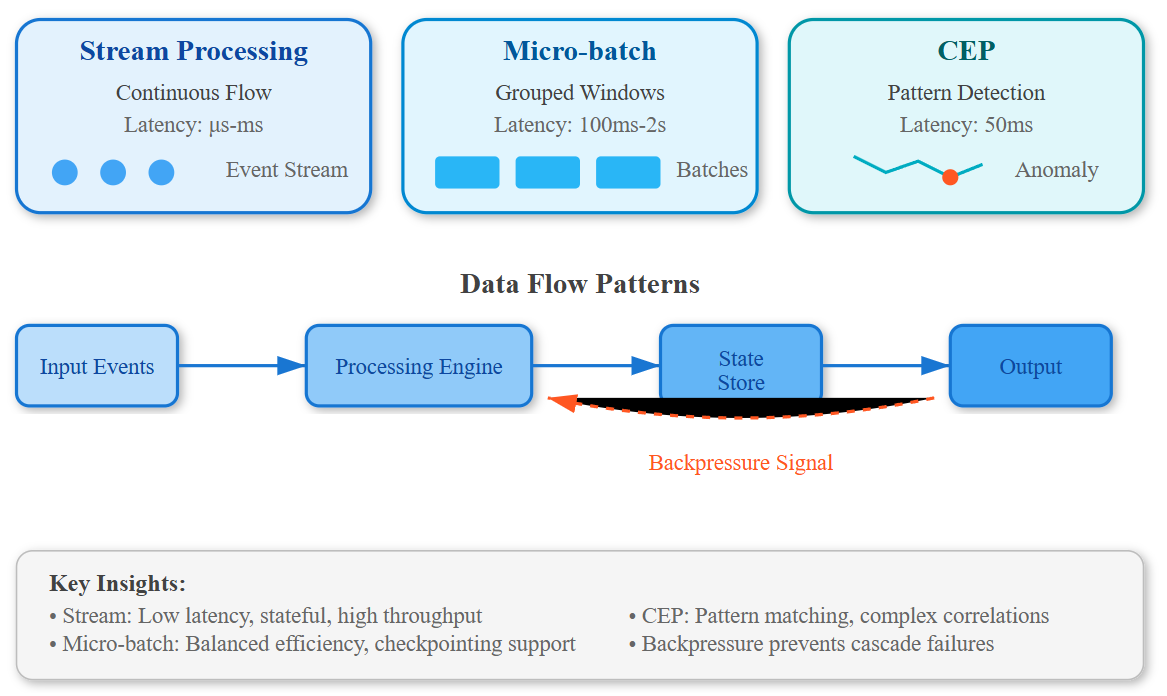

The Three Processing Paradigms

Real-time systems generally follow one of three processing patterns, each suited to a different latency problem:

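Stream Processing handles continuous data flows event by event, with latency typically in the low milliseconds. Think Kafka Streams or Apache Flink processing click events as they arrive. The critical insight: stream processors keep state local to the operator (in memory or in an embedded store such as RocksDB) instead of querying an external database for every event, and they rely on periodic checkpoints or changelogs to recover that state after a failure. Netflix uses this for recommendation updates: your viewing history influences suggestions within 200 ms.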
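The core idea of stateful stream processing can be sketched without any framework: each event updates keyed state held locally by the operator and produces a result immediately, and a snapshot of that state is what a checkpoint would persist. This is plain Python, not the Kafka Streams or Flink API; `ClickEvent`, `ClickCounter`, and its methods are hypothetical names chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ClickEvent:
    user_id: str
    item_id: str


class ClickCounter:
    """Hypothetical stateful operator: running click count per user."""

    def __init__(self):
        # Keyed state kept in process memory, not in an external database.
        self.state = defaultdict(int)

    def process(self, event):
        # Handle each event as soon as it arrives and emit the updated count.
        self.state[event.user_id] += 1
        return event.user_id, self.state[event.user_id]

    def snapshot(self):
        # What a checkpoint would persist: a copy of the current state.
        return dict(self.state)

    @classmethod
    def restore(cls, snapshot):
        # Rebuild an operator from a snapshot, e.g. after a crash.
        op = cls()
        op.state.update(snapshot)
        return op


op = ClickCounter()
events = [ClickEvent("alice", "a1"), ClickEvent("bob", "b7"), ClickEvent("alice", "a2")]
updates = [op.process(e) for e in events]
print(updates)  # [('alice', 1), ('bob', 1), ('alice', 2)]

recovered = ClickCounter.restore(op.snapshot())
print(recovered.process(ClickEvent("bob", "b9")))  # ('bob', 2)
```

Real engines partition this state by key across many operator instances and write snapshots to durable storage; here `snapshot` and `restore` simply copy a dictionary to show how local state can still survive a failure.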

Micro-batch Processing groups events into small windows (typically 100 ms to 2 s) and processes each window as a unit. Spark Streaming popularized this approach. The hidden advantage? Batching amortizes per-record overhead and lets the system checkpoint progress once per batch rather than once per event, without sacrificing perceived real-time behavior. Uber’s surge pricing recalculates every few seconds using micro-batches: fast enough to feel instant, efficient enough to handle millions of riders.
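The following sketch shows the micro-batch idea: events are grouped into fixed intervals by arrival time, each batch is processed as a unit, and progress is checkpointed once per batch. It assumes events arrive in timestamp order and uses a hypothetical 500 ms interval; `Event`, `micro_batches`, and `run` are illustrative names, not Spark’s API.

```python
from dataclasses import dataclass


@dataclass
class Event:
    ts_ms: int     # arrival time in milliseconds
    value: float


def micro_batches(events, interval_ms):
    """Yield (window_index, batch) for consecutive non-empty intervals.

    Assumes events are already ordered by ts_ms.
    """
    current, batch = None, []
    for ev in events:
        window = ev.ts_ms // interval_ms
        if current is not None and window != current:
            yield current, batch
            batch = []
        current = window
        batch.append(ev)
    if batch:
        yield current, batch


def run(events, interval_ms=500):
    results, checkpoints, processed = [], [], 0
    for window, batch in micro_batches(events, interval_ms):
        # One aggregate per batch amortizes per-record overhead.
        total = sum(ev.value for ev in batch)
        results.append((window, len(batch), total))
        processed += len(batch)
        # Record progress once per batch, not once per event.
        checkpoints.append((window, processed))
    return results, checkpoints


events = [Event(10, 1.0), Event(120, 2.0), Event(480, 3.0), Event(520, 4.0), Event(1600, 5.0)]
results, checkpoints = run(events)
print(results)      # [(0, 3, 6.0), (1, 1, 4.0), (3, 1, 5.0)]
print(checkpoints)  # [(0, 3), (1, 4), (3, 5)]
```

Five events produce only three checkpoints here, which is the efficiency the batching buys. Empty intervals are simply skipped in this sketch.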

Complex Event Processing (CEP) detects patterns across event streams in real time, such as a particular combination of events occurring within a time window. This is where fraud detection lives. When your credit card gets blocked mid-transaction, a CEP engine has likely just matched your spending pattern against known fraud signatures in under 50 ms.
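A CEP rule pairs a pattern with a time constraint. The sketch below implements one hypothetical fraud signature, a card used in three or more distinct countries within 60 seconds, using a per-card sliding window. It is a simplified stand-in written in plain Python, not the API of a CEP engine such as Flink CEP or Esper, and the rule, thresholds, and names (`Txn`, `CountryHopDetector`) are assumptions for illustration.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class Txn:
    card: str
    ts_s: float
    amount: float
    country: str


class CountryHopDetector:
    """Hypothetical rule: flag a card seen in >= min_countries countries within window_s."""

    def __init__(self, window_s=60.0, min_countries=3):
        self.window_s = window_s
        self.min_countries = min_countries
        self.recent = defaultdict(deque)  # card -> its transactions inside the window

    def on_event(self, txn):
        q = self.recent[txn.card]
        q.append(txn)
        # Evict transactions older than the window (assumes per-card time order).
        while txn.ts_s - q[0].ts_s > self.window_s:
            q.popleft()
        countries = {t.country for t in q}
        return len(countries) >= self.min_countries


det = CountryHopDetector(window_s=60, min_countries=3)
stream = [
    Txn("c1", 0, 20.0, "US"),
    Txn("c2", 5, 15.0, "US"),
    Txn("c1", 20, 900.0, "BR"),
    Txn("c1", 45, 1200.0, "RU"),  # third country within 60 s: flagged
    Txn("c1", 200, 30.0, "US"),   # older transactions evicted: not flagged
]
flags = [det.on_event(t) for t in stream]
print(flags)  # [False, False, False, True, False]
```

Because each card keeps only the transactions inside its window, the check per event stays cheap, which is what lets rules like this run inline with the transaction.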