Serverless Architecture Patterns Beyond Functions

When Functions Aren’t Enough

You’ve built a serverless API using Lambda functions. It handles 10,000 requests per second beautifully. Then your product team announces a new feature: video transcoding. A single Lambda times out after 15 minutes, and your 4K videos need 45 minutes. You realize serverless doesn’t mean “only functions”—it means abstracting infrastructure. The real patterns emerge when you combine multiple serverless primitives into cohesive architectures that handle workloads functions alone cannot.

The Serverless Spectrum: Beyond FaaS

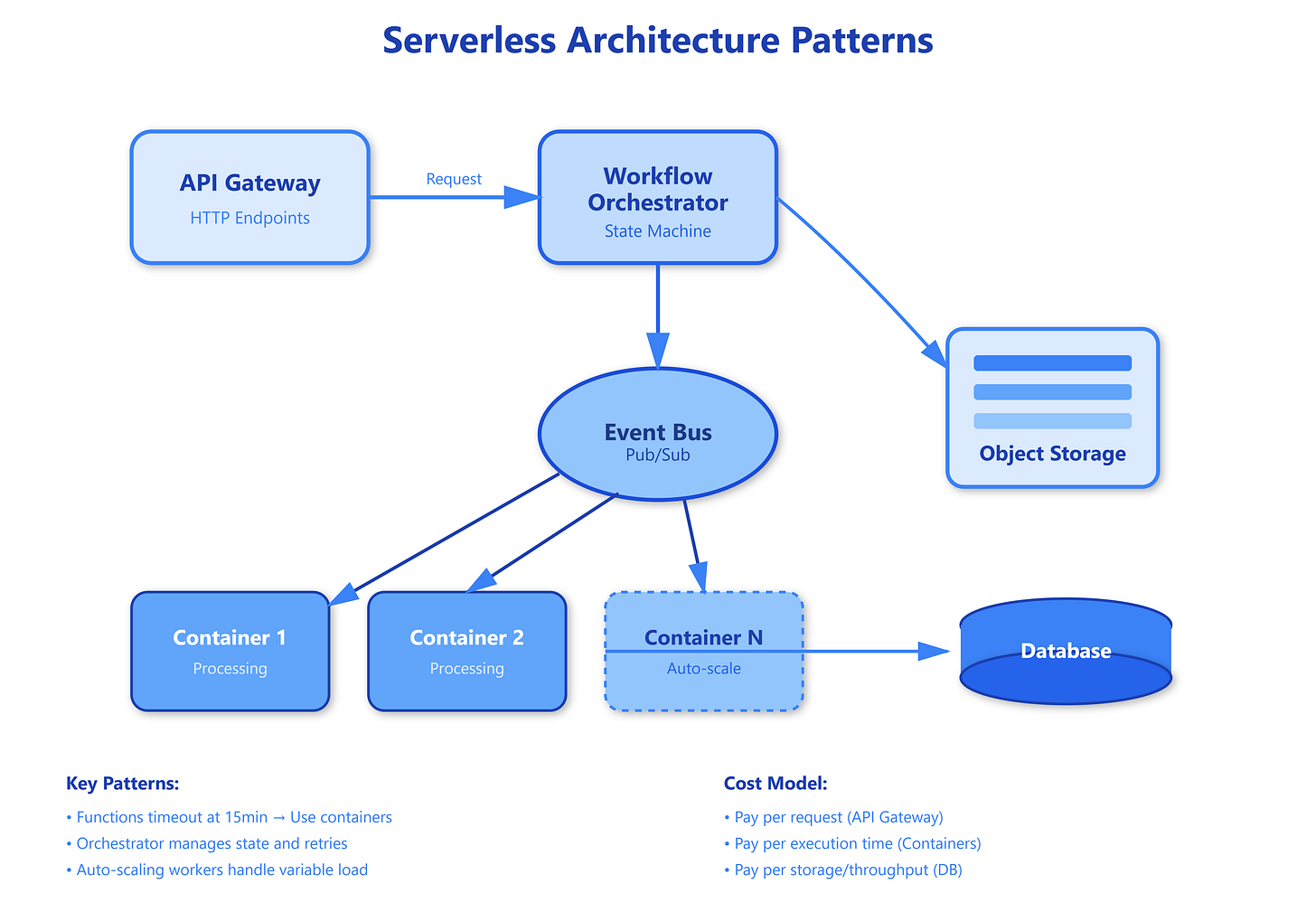

Traditional serverless discussions center on FaaS (Function-as-a-Service), but production systems require five distinct serverless patterns working in concert. Serverless containers provide long-running compute without managing clusters: AWS Fargate runs containers for hours, and Google Cloud Run scales to zero while running any workload you can package as a container image. This solves the timeout problem: your transcoding job runs in a container that terminates when done, so you pay only for the time it actually runs.

Workflow orchestration connects these pieces. AWS Step Functions or Azure Durable Functions coordinate multi-step processes as code, handling retries, parallel execution, and human approval steps. A video processing pipeline might trigger a container, wait for completion, invoke a function to update metadata, then send notifications—all defined as a state machine that recovers from failures at any step.
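To make the state-machine idea concrete, here is a minimal sketch of such a pipeline written as an Amazon States Language definition in a Python dict. The cluster, task definition, function name, and topic ARN are placeholders invented for illustration, and a real Fargate task would also need a network configuration that is omitted here. The small helper only walks the `Next` pointers to show the order in which the states run.

```python
import json

# Placeholder names throughout: none of these resources come from the article.
PIPELINE = {
    "Comment": "Sketch: transcode, update metadata, notify",
    "StartAt": "Transcode",
    "States": {
        "Transcode": {
            "Type": "Task",
            # The .sync suffix makes the workflow wait until the task stops.
            "Resource": "arn:aws:states:::ecs:runTask.sync",
            "Parameters": {
                "LaunchType": "FARGATE",
                "Cluster": "video-cluster",
                "TaskDefinition": "transcoder",
            },
            "Next": "UpdateMetadata",
        },
        "UpdateMetadata": {
            "Type": "Task",
            "Resource": "arn:aws:states:::lambda:invoke",
            "Parameters": {"FunctionName": "update-metadata", "Payload.$": "$"},
            "Next": "Notify",
        },
        "Notify": {
            "Type": "Task",
            "Resource": "arn:aws:states:::sns:publish",
            "Parameters": {
                "TopicArn": "arn:aws:sns:us-east-1:123456789012:video-done",
                "Message": "Transcoding finished",
            },
            "End": True,
        },
    },
}


def execution_order(definition):
    """Follow Next pointers from StartAt until a state marked End."""
    order = []
    name = definition["StartAt"]
    while True:
        order.append(name)
        state = definition["States"][name]
        if state.get("End"):
            return order
        name = state["Next"]


if __name__ == "__main__":
    print(" -> ".join(execution_order(PIPELINE)))
    print(json.dumps(PIPELINE, indent=2)[:80], "...")
```

The JSON produced by `json.dumps(PIPELINE)` is the form a state machine definition takes when it is deployed.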

Event-driven storage and databases complete the picture. S3 events trigger processing when files arrive. DynamoDB Streams capture data changes as events. Aurora Serverless automatically scales database capacity to match load, sleeping during idle periods. These aren’t just managed services—they’re primitives that emit events and scale without capacity planning.

The critical shift is architectural: instead of “function that does everything,” think “event flows through specialized components.” Your video upload triggers an S3 event, which starts a Step Function workflow, which launches a Fargate task for transcoding, which writes results to DynamoDB, which streams changes to a Lambda for notifications. Each component handles what it does best, scaling independently.
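The first hop in that flow, an S3 event starting a workflow, could be sketched as a small Lambda handler like the one below. The state machine ARN is a placeholder, and the `sfn` parameter is an assumption added so the handler can be exercised with a stub client; when it is omitted the code falls back to boto3's Step Functions client.

```python
import json
from urllib.parse import unquote_plus

# Placeholder ARN, not a real resource.
STATE_MACHINE_ARN = (
    "arn:aws:states:us-east-1:123456789012:stateMachine:video-pipeline"
)


def uploads_from_s3_event(event):
    """Extract (bucket, key) pairs from an S3 notification event."""
    pairs = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        # S3 URL-encodes object keys in notifications (spaces arrive as '+').
        pairs.append((s3["bucket"]["name"], unquote_plus(s3["object"]["key"])))
    return pairs


def handler(event, context=None, sfn=None):
    """Start one workflow execution per uploaded object."""
    if sfn is None:
        import boto3  # imported lazily so the sketch runs without AWS access

        sfn = boto3.client("stepfunctions")
    started = []
    for bucket, key in uploads_from_s3_event(event):
        response = sfn.start_execution(
            stateMachineArn=STATE_MACHINE_ARN,
            input=json.dumps({"bucket": bucket, "key": key}),
        )
        started.append(response["executionArn"])
    return started
```

The handler does no processing itself; it only translates the storage event into workflow input, which keeps each component responsible for one thing.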

API composition ties these patterns to users. API Gateway doesn’t just route to functions—it transforms requests, validates schemas, caches responses, and throttles clients. When combined with AppSync for GraphQL or EventBridge for event routing, you build sophisticated APIs where backend implementation is completely decoupled from interface contracts.

Critical Insights: What Enterprise Systems Reveal

Common Knowledge: Serverless containers bridge the gap between functions and traditional compute. They eliminate cluster management while supporting any runtime, language, or library. Most teams discover this when Lambda’s 15-minute limit or 10GB memory cap becomes a blocker.

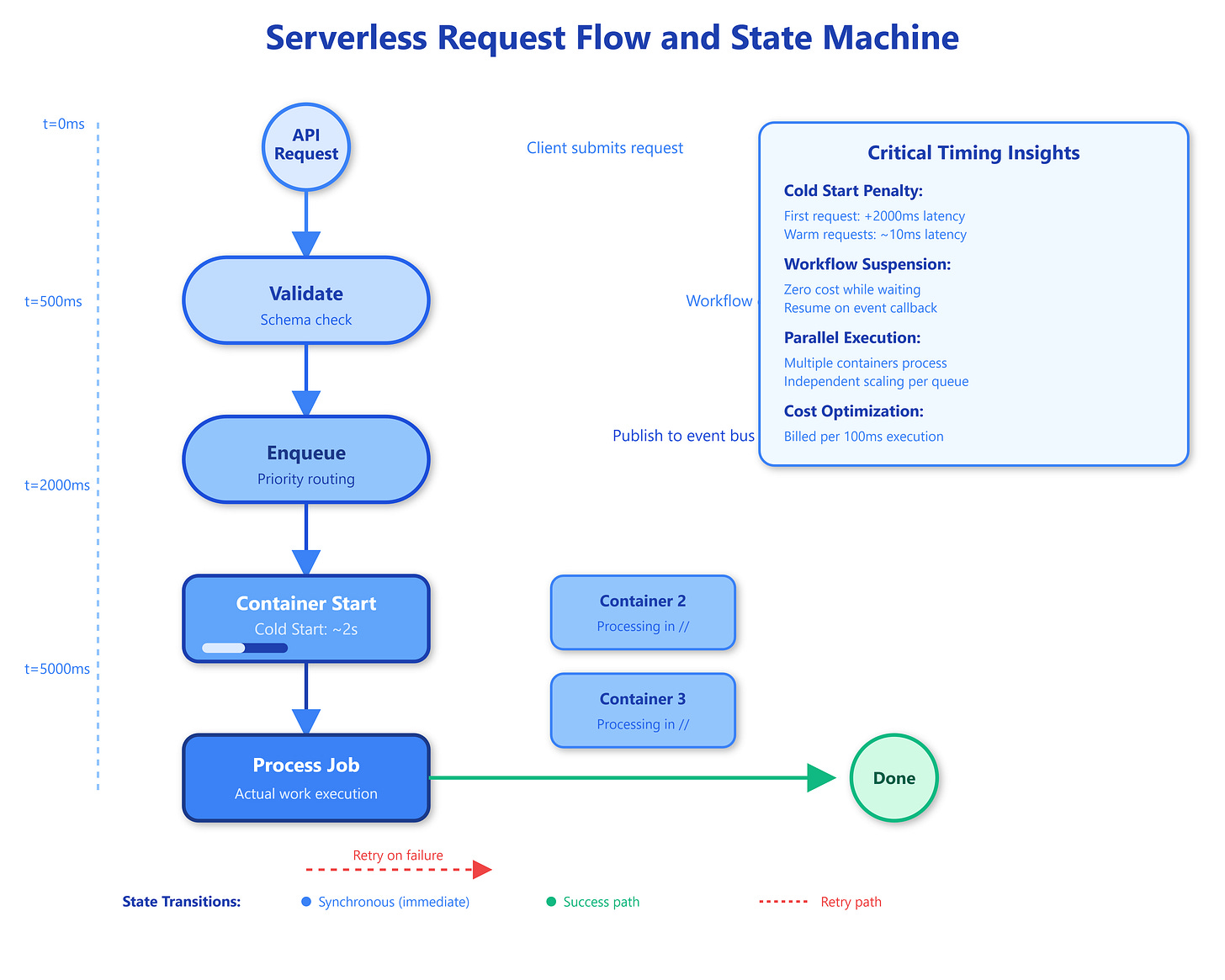

Rare Knowledge: Cold starts compound across patterns. A Step Function invoking a Fargate task suffers two cold starts—workflow initialization plus container launch. Netflix solved this by maintaining “warm pools” of containers for latency-sensitive workflows, defeating the serverless promise but meeting SLA requirements. The trade-off: predictable performance costs more than pure pay-per-use.

Advanced Insight: Event ordering breaks in distributed serverless systems. DynamoDB Streams preserve the order of changes to each individual item, but Step Functions fan-out operations (Map and Parallel states) don’t guarantee the sequence in which child executions run. At scale, “process items 1, 2, 3” might execute as “2, 1, 3.” Stripe’s payment processing handles this by making operations idempotent and using event timestamps rather than arrival order.
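The general technique of idempotent handling ordered by event time can be sketched in a few lines. This is an illustration of the idea only, not any company's implementation; the event fields (`id`, `timestamp`, `status`) and the in-memory state are assumptions, and a production system would keep the seen IDs and the latest timestamp in a durable store with conditional writes.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentState:
    status: str = "unknown"
    updated_at: float = 0.0
    seen_event_ids: set = field(default_factory=set)


def apply_event(state, event):
    """Apply an event idempotently, ordered by event time rather than arrival."""
    if event["id"] in state.seen_event_ids:
        return state  # duplicate delivery: applying it again changes nothing
    state.seen_event_ids.add(event["id"])
    # Only a newer event may overwrite the status; stale ones are recorded
    # as seen but otherwise ignored.
    if event["timestamp"] > state.updated_at:
        state.status = event["status"]
        state.updated_at = event["timestamp"]
    return state


if __name__ == "__main__":
    state = PaymentState()
    arrivals = [
        {"id": "e2", "timestamp": 2.0, "status": "authorized"},
        {"id": "e1", "timestamp": 1.0, "status": "created"},
        {"id": "e3", "timestamp": 3.0, "status": "captured"},
        {"id": "e2", "timestamp": 2.0, "status": "authorized"},  # redelivery
    ]
    for event in arrivals:
        apply_event(state, event)
    print(state.status)
```

Events arriving as "2, 1, 3" with a duplicate leave the state at the status carried by the latest timestamp, which is the property the paragraph above relies on.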

Strategic Impact: Cost optimization requires rethinking “servers.” By one estimate, a workload of 10 million 50 ms requests per month costs $370 on Lambda but $31 on Cloud Run (with proper scaling configuration), though the exact figures depend heavily on memory allocation and current pricing. The difference? A container instance can serve many concurrent requests and amortizes its cold start across them, while Lambda bills every invocation separately. Uber moved batch processing from Lambda to Fargate, reducing costs 60% despite longer runtime.

Implementation Nuance: State machines expose retry policies most developers misuse. In Step Functions, a retrier defaults to 3 attempts with a 1-second initial interval and a backoff rate of 2.0, and jitter has to be enabled explicitly, so workflows with tight time bounds need custom retry settings. A video encoding workflow might retry API failures aggressively (10 times, 1-second intervals) but encoding failures conservatively (3 times, 5-minute intervals), reflecting different failure modes.
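The two retry policies described above might look like this as Amazon States Language retriers, written here as Python dicts. The error names `ApiError` and `EncodingError` are hypothetical; a real workflow would match whatever error names its tasks actually raise. The helper computes the wait before each retry from the retrier's fields, using the documented defaults (1-second interval, 3 attempts, backoff rate 2.0) when a field is absent.

```python
# Hypothetical error names; the numeric settings mirror the text above.
API_RETRY = {
    "ErrorEquals": ["ApiError"],
    "IntervalSeconds": 1,
    "MaxAttempts": 10,
    "BackoffRate": 1.0,  # constant 1-second spacing
}
ENCODING_RETRY = {
    "ErrorEquals": ["EncodingError"],
    "IntervalSeconds": 300,
    "MaxAttempts": 3,
    "BackoffRate": 1.0,  # constant 5-minute spacing
}


def retry_delays(retrier):
    """Seconds waited before each retry: interval * backoff_rate ** n."""
    interval = retrier.get("IntervalSeconds", 1)
    attempts = retrier.get("MaxAttempts", 3)
    rate = retrier.get("BackoffRate", 2.0)
    return [interval * rate ** n for n in range(attempts)]


if __name__ == "__main__":
    print("api:", sum(retry_delays(API_RETRY)), "seconds of waiting at most")
    print("encoding:", sum(retry_delays(ENCODING_RETRY)), "seconds of waiting at most")
    print("defaults:", retry_delays({}))
```

Both retriers would sit in the `Retry` list of the same task state; Step Functions applies the first one whose `ErrorEquals` matches the error. Summing the delays is a quick way to check whether a policy fits inside the workflow's time budget.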

Observability Complexity: Distributed tracing becomes mandatory, not optional. A single user request might touch API Gateway, Lambda, Step Functions, Fargate, DynamoDB, and EventBridge. Without a correlation ID propagated across every hop, debugging turns into guesswork because the log lines from different services cannot be tied to the same request. AWS X-Ray and OpenTelemetry address this, but they require instrumentation at every service boundary; even S3 events need context injection.
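Stripped of any tracing library, correlation ID propagation comes down to three steps: mint an ID at the edge, copy it into every downstream message, and include it in every log line. The sketch below shows that with plain dicts; the field name `correlation_id` and the helper names are assumptions for illustration, not part of X-Ray or OpenTelemetry.

```python
import json
import uuid

CORRELATION_KEY = "correlation_id"  # hypothetical field name


def ensure_correlation_id(message):
    """Return a copy of the message that carries a correlation ID."""
    out = dict(message)
    if not out.get(CORRELATION_KEY):
        out[CORRELATION_KEY] = str(uuid.uuid4())  # minted once, at the edge
    return out


def child_message(parent, payload):
    """Build a downstream message that inherits the parent's correlation ID."""
    return {**payload, CORRELATION_KEY: parent[CORRELATION_KEY]}


def log_line(message, text):
    """Structured log line that can be joined across services by ID."""
    return json.dumps({CORRELATION_KEY: message[CORRELATION_KEY], "msg": text})


if __name__ == "__main__":
    request = ensure_correlation_id({"path": "/upload"})
    job = child_message(request, {"task": "transcode"})
    print(log_line(request, "upload received"))
    print(log_line(job, "transcode started"))
```

Both log lines carry the same ID, so a log query on that one value reconstructs the request's path through the system.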

Real-World Implementations

GitHub Link

https://github.com/sysdr/sdir/tree/main/Serverless_Architecture_Patterns_Beyond_Functions

Netflix’s Encoding Pipeline processes millions of video files daily using Step Functions orchestrating Fargate tasks. Their workflow handles 12 quality variants per video, with parallel encoding jobs scaling to thousands of containers during peak hours. They discovered that spot instances (up to 90% cheaper) work perfectly for encoding: interrupted containers simply restart from checkpoints stored in S3.

Stripe’s Payment Processing uses Lambda for API endpoints but routes complex payment flows through Step Functions. A cross-border payment might wait hours for bank confirmation—far beyond Lambda’s timeout. Their workflow suspends on “wait for callback” states, consuming zero resources during idle periods but resuming instantly when webhooks arrive.
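A generic version of the wait-for-callback pattern can be sketched as follows. In Step Functions, a task state whose resource ends in `.waitForTaskToken` pauses until something calls `send_task_success` or `send_task_failure` with the token it handed out. The webhook fields, the `token_store` mapping, and the `BankRejected` error name below are assumptions for illustration; this is not a description of any company's actual code.

```python
import json


def handle_bank_webhook(webhook, token_store, sfn=None):
    """Resume the paused workflow that is waiting on this payment."""
    if sfn is None:
        import boto3  # imported lazily so the sketch runs without AWS access

        sfn = boto3.client("stepfunctions")
    # token_store maps payment IDs to task tokens saved when the workflow
    # entered its waiting state (a database table in a real system).
    token = token_store.pop(webhook["payment_id"], None)
    if token is None:
        return "ignored"  # unknown payment or a repeated webhook
    if webhook["status"] == "confirmed":
        sfn.send_task_success(taskToken=token, output=json.dumps(webhook))
        return "resumed"
    sfn.send_task_failure(
        taskToken=token,
        error="BankRejected",
        cause=webhook.get("reason", "unknown"),
    )
    return "failed"
```

Removing the token before responding means a repeated webhook for the same payment is ignored rather than resuming the workflow twice.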

Airbnb’s Search Infrastructure combines API Gateway caching with Lambda@Edge for geographic distribution. Popular searches return cached results from 200+ edge locations in under 20ms. When cache misses occur, Lambda@Edge fetches from DynamoDB Global Tables—fully serverless, globally distributed, and automatically scaled. Their architecture serves 500,000 searches/second without managing a single server.

Architectural Considerations

Choose FaaS (Lambda) for request-response workloads under 15 minutes with standard runtimes. Use serverless containers (Fargate, Cloud Run) for batch jobs, custom runtimes, or GPU work where the platform supports it (Cloud Run offers GPUs; Fargate does not). Workflow orchestration becomes essential when processes span multiple services or require human approval.

Monitor costs obsessively—serverless amplifies inefficiency. A poorly designed Step Function might invoke Lambda 100 times where one Fargate task suffices. Use DynamoDB on-demand mode for unpredictable traffic but provisioned capacity for steady loads. Aurora Serverless shines for development environments (auto-pauses overnight) but struggles with spiky production traffic due to scaling delays.

Serverless excels at event-driven architectures but fights synchronous request chains. If service A calls B calls C synchronously, each pays for idle waiting time. Instead, use EventBridge or SNS/SQS to decouple—A publishes events, B and C process asynchronously. This pattern reduces costs and increases resilience.
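As a rough sketch of the decoupled version, service A can publish an event to EventBridge and return immediately, leaving B and C to subscribe through rules. The source name `video.pipeline`, the detail type, and the `events` parameter (added so a stub client can be passed in) are assumptions; `put_events` is the boto3 EventBridge call and accepts up to 10 entries per request.

```python
import json


def build_entry(detail_type, detail, source="video.pipeline", bus="default"):
    """Shape one EventBridge entry; Detail must be a JSON string."""
    return {
        "Source": source,
        "DetailType": detail_type,
        "Detail": json.dumps(detail),
        "EventBusName": bus,
    }


def publish(entries, events=None):
    """Publish entries and fail loudly if any were rejected."""
    if events is None:
        import boto3  # imported lazily so the sketch runs without AWS access

        events = boto3.client("events")
    response = events.put_events(Entries=entries)
    failed = response.get("FailedEntryCount", 0)
    if failed:
        raise RuntimeError(f"{failed} event(s) were not accepted")
    return response


if __name__ == "__main__":
    entry = build_entry("TranscodeCompleted", {"video_id": "v-123"})
    print(entry)
```

Checking `FailedEntryCount` matters because `put_events` can accept a request while still rejecting individual entries.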

Practical Takeaway

Master serverless by implementing a multi-pattern system. Run bash setup.sh to deploy a video processing pipeline demonstrating all five patterns: API Gateway receives uploads and triggers a Step Function workflow, which launches a container for processing, writes to a database, and publishes completion events. The dashboard shows cold starts, workflow states, and cost implications in real time.

Extend this demo by adding error scenarios—kill a container mid-processing and watch the workflow retry. Add a second workflow branch for thumbnail generation to observe parallel execution. Implement a circuit breaker that falls back to synchronous processing when async queues exceed thresholds. These exercises reveal how serverless patterns compose into resilient, cost-effective systems that scale from zero to millions without infrastructure management.

The future of serverless isn’t “no servers”—it’s “no infrastructure decisions.” You choose patterns (functions, containers, workflows) based on workload characteristics, and the cloud provider handles provisioning, scaling, and reliability. Understanding when to apply each pattern transforms serverless from a cost center into a strategic advantage.