The “Hot Key” Crisis in Consistent Hashing: When Virtual Nodes Fail You

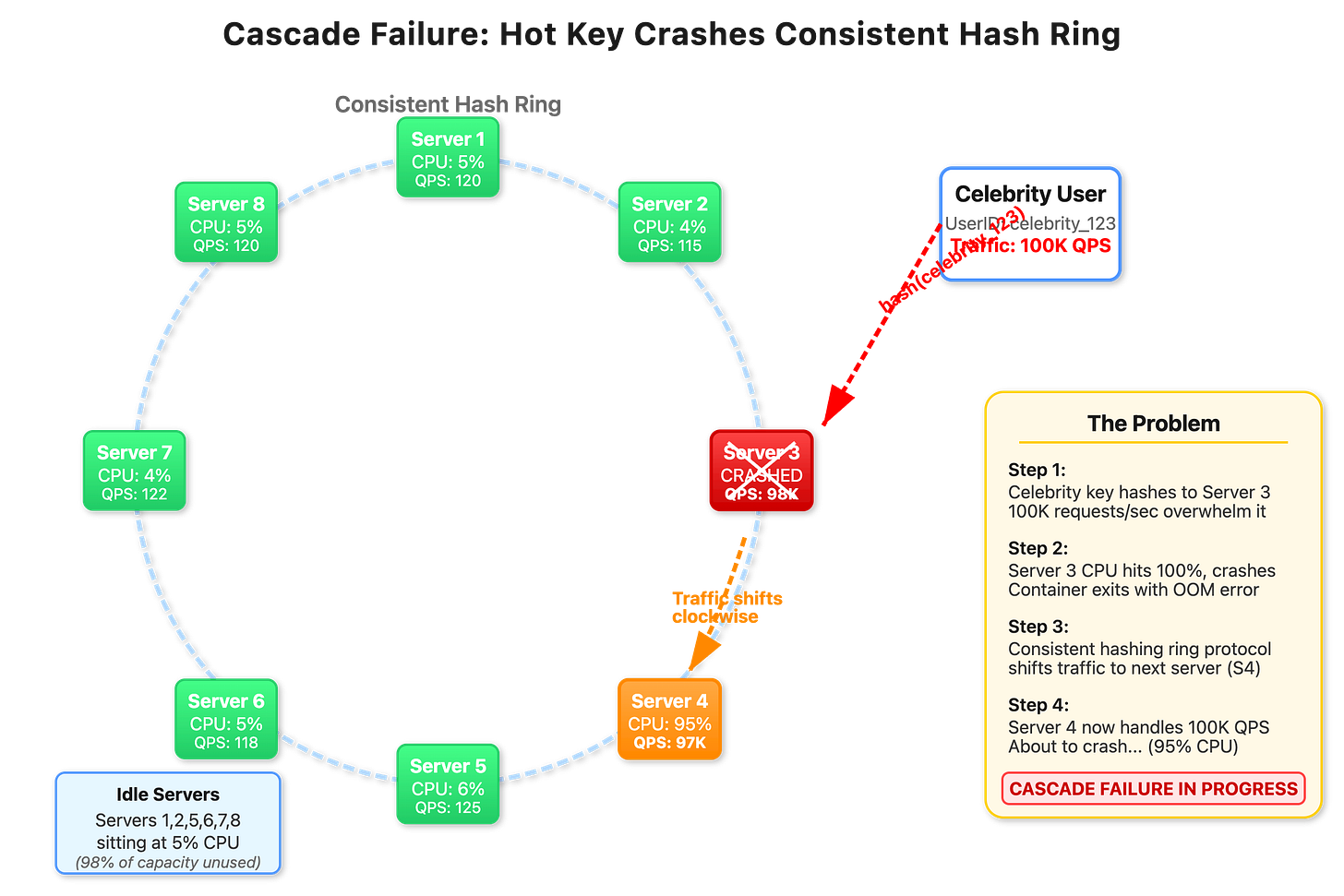

You have architected a distributed rate-limiter or websocket cluster using Consistent Hashing. User IDs map to specific servers, giving you cache locality and deterministic routing. Everything works perfectly until a “Celebrity” (or a rogue AI Agent) with millions of followers joins the platform.

Their assigned server hits 100% CPU and crashes. The hash ring shifts that traffic to the next server—which immediately crashes too. Within minutes, you have lost three servers to a Cascading Failure, while the other 95 servers sit idle at 5% CPU.

This is not a “Virtual Node” problem. It is an Access Skew problem, and most engineers attempt to solve it with the wrong tool.

Data Skew vs. Access Skew

The most common mistake in system design interviews is suggesting “Add more Virtual Nodes” to fix a hot key.

Virtual Nodes solve Data Skew: They ensure that if you have 1,000 keys, they are spread evenly across servers.

Nothing solves Access Skew in a pure hash ring: If “User A” generates 100,000 requests per second, and “User A” hashes to

Server 1, that server will melt. Virtual nodes just move the melting point to a different server.

Key Insight: Virtual nodes partition the keyspace, not the traffic volume of individual keys.

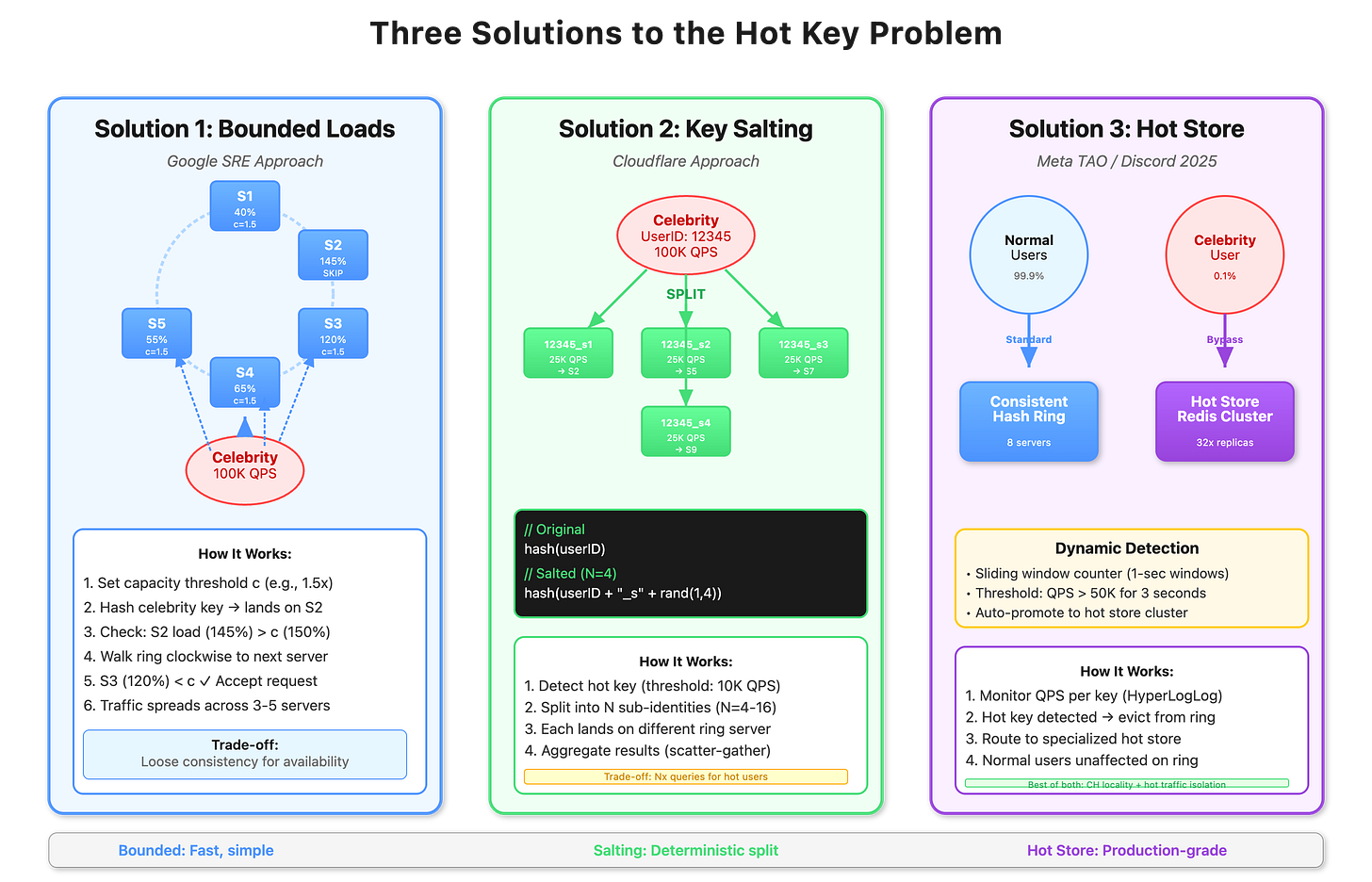

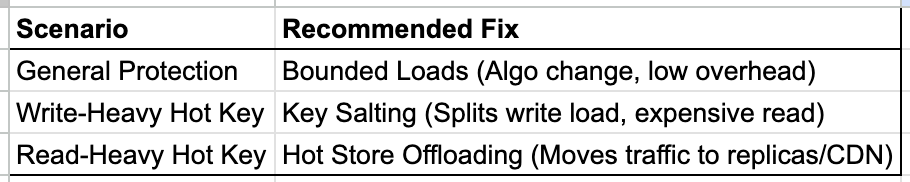

Solution A: Consistent Hashing with Bounded Loads

Best for: Latency-sensitive services where you want to preserve cache locality for 99% of users but prevent crashes for the 1%.

This approach, pioneered by Google and Vimeo, adds a “Safety Valve” to the hashing algorithm.

Assign a generic capacity $C$ to every server (e.g., $C = 1.25 \times \text{Average Load}$).

Compute the target server for a request.

Check: Is

Current_Load(Target)> $C$?If No: Route normally.

If Yes: “Fall through” to the next server in the ring.

The Math:

With a capacity factor $C=1.5$, a “Hot Key” generating $10\times$ normal traffic will spill over and distribute itself across roughly 7 servers sequentially.

Trade-off: You lose “Strict Consistency” (the hot user is no longer on a single server), but you gain Availability.

Solution B: Key Salting & Scatter-Gather

Best for: Read-heavy analytics or high-throughput writes where data aggregation is acceptable.

When a key is identified as “Hot” (e.g., > 10k QPS), we fundamentally change the hashing contract. We stop hashing UserID. Instead, we hash a “Salted” version of the ID to splinter the traffic.

$$\text{Target} = \text{Hash}(\text{UserID} + \text{Random}(0, N))$$

The Mechanism: A single “Justin Bieber” ID is logically split into

Bieber_1,Bieber_2...Bieber_N.The Result: Traffic is forced to spread across $N$ distinct servers.

The Cost: To read the data back, you must query all $N$ potential servers (Scatter-Gather) and aggregate the results. This increases read latency but allows infinite write scalability.

Solution C: Dynamic “Hot Store” Promotion

Best for: Social Networks and Chat Apps (Read Heavy).

In 2026, systems like Discord and Meta don’t just “handle” hot keys on the main ring; they evict them.

Detection: A “Heavy Hitter” detector (using Count-Min Sketch or eBPF) identifies a key exceeding a velocity threshold (e.g., 50k req/sec).

Promotion: The system updates the routing metadata: “User A is now HOT.”

Redirection: The Load Balancer sees the flag and routes “User A” bypassing the Consistent Hash Ring entirely.

Destination: Traffic goes to a dedicated “Hot Tier”—typically a massively replicated Redis cluster or a CDN.

Deep Dive: Detection via eBPF

Older systems waited for the application to count requests, which is often too slow (the app crashes before it can count the spike).

Modern 2026 architectures use eBPF (Extended Berkeley Packet Filter) in the kernel network stack.

How it works: The kernel inspects TCP packets before they hit the application user space.

Action: If the kernel sees 10,000 packets for

user_id=8821in 100ms, it tags the packet headers with ahot_keyflag.Reaction: The Load Balancer sees the tag and immediately reroutes or rate-limits without the application server ever parsing the request.

Summary Verdict for Engineers

You cannot abandon Consistent Hashing without losing cache locality. The elegance of the system is deterministic routing. However, you must build Escape Hatches for the outliers.

Code Demo

https://github.com/sysdr/sdir/tree/main/hot-key/hotkey-demo